TL;DR (for the busy execs)

- Migrated an existing Postgres data warehouse to a highly scalable Snowflake architecture.

- Standardized ingestion (Meltano/Python API) and transformation (dbt) processes.

- Implemented a robust Role-Based Access Control (RBAC) security model in Snowflake.

- Empowered the existing Data/ML team by removing infrastructure bottlenecks.

- Established a clean, documented data layer that the ML team can build future AI initiatives on.

Context

Kiwi already had a capable Data and Machine Learning team when we began our engagement. However, as the company grew, they were hitting significant scalability limits.

Their existing data warehouse, built on Postgres, was struggling to keep up with growing data volumes and complex analytical queries. There were also no standardized internal processes for data ingestion and modeling. As a result, the ML team spent too much time on data engineering tasks, and downstream teams could not easily access and use the data they needed.

The mandate was clear: Kiwi needed a scalable, secure, and standardized data foundation that could support their current operations and their future ML and AI ambitions.

What we built

We designed and implemented a complete modern data architecture, moving them from a monolithic Postgres setup to a decoupled, scalable cloud data platform.

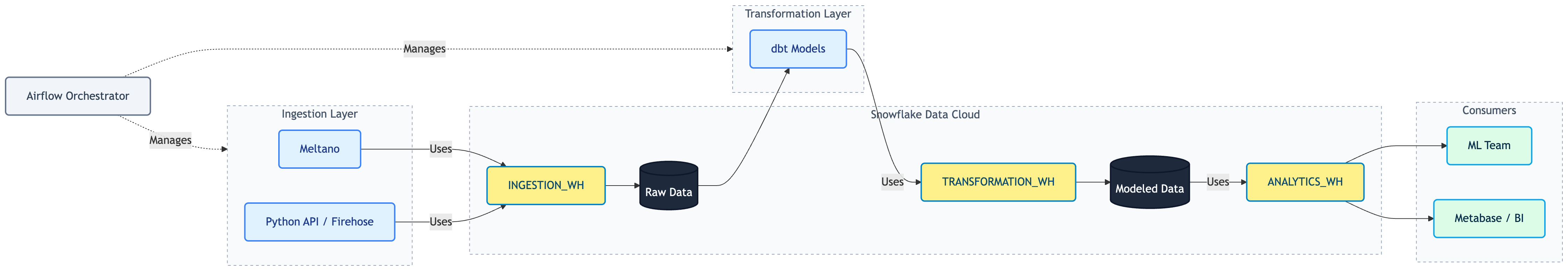

At a high level, the architecture included:

- Infrastructure as Code: We used Terraform to deploy and manage AWS and Snowflake resources, ensuring the platform was reproducible and easy to evolve.

- Ingestion: We set up automated ingestion using Meltano for standard sources and custom Python APIs (via AWS Lambda and Firehose) for specialized real-time data streams.

- Storage & Compute: We migrated the data warehouse to Snowflake, setting up dedicated warehouses to isolate workloads and ensure consistent performance.

- Transformation: We introduced dbt for reliable, version-controlled data modeling.

- Orchestration: We deployed Apache Airflow to orchestrate the entire data pipeline reliably.
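To make the orchestration idea concrete, here is a minimal sketch of how task dependencies determine run order. It uses Python's standard-library `graphlib` rather than Airflow itself, and the task names (`meltano_ingest`, `custom_api_ingest`, `dbt_run`, `dbt_test`) are hypothetical, not taken from Kiwi's actual pipeline.

```python
from graphlib import TopologicalSorter

# Hypothetical task names. Each task maps to the set of tasks it depends on.
PIPELINE = {
    "meltano_ingest": set(),
    "custom_api_ingest": set(),
    "dbt_run": {"meltano_ingest", "custom_api_ingest"},
    "dbt_test": {"dbt_run"},
}


def execution_order(dependencies):
    """Return one valid run order in which every task follows its dependencies."""
    return list(TopologicalSorter(dependencies).static_order())


order = execution_order(PIPELINE)
print(order)
```

An orchestrator such as Airflow applies the same principle at scale: transformations only start once every ingestion step they depend on has finished, and tests only run against freshly built models.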

The hard part: standardizing processes and securing the foundation

Moving from a monolithic Postgres database to a decoupled architecture requires discipline. The technology change is only half the battle; the other half is changing how the team works with data.

We worked closely with the Kiwi team to standardize how data was ingested and modeled. By establishing clear conventions and using dbt, we ensured that downstream teams could easily consume and understand the data.

Another critical challenge was security and controlled access to sensitive data. In a growing organization, it's essential that people and services only see the information appropriate for their roles. To address this, we set up granular controls for access to Personally Identifiable Information (PII). For example, while everyone who needs it can query the dim_users table for analysis, only users with approved roles can view sensitive fields like email addresses or other PII. This approach meant we could safely open up more data for broader consumption (unlocking self-serve capabilities) while keeping strict governance over sensitive information.
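The access rule described above can be sketched in a few lines of Python. This is an illustration of the logic only, not the mechanism used in the project: in Snowflake, rules like this are typically enforced inside the warehouse (for example with column masking policies). The role names, the mask value, and the sample row are assumptions; only `dim_users` and the email field come from the description above.

```python
# Hypothetical role names and mask value, used for illustration only.
PII_COLUMNS = {"email"}
PII_APPROVED_ROLES = {"PII_READER"}
MASK = "***MASKED***"


def apply_pii_policy(row, role):
    """Return a copy of a dim_users row with PII masked unless the role is approved."""
    if role in PII_APPROVED_ROLES:
        return dict(row)
    return {col: (MASK if col in PII_COLUMNS else value) for col, value in row.items()}


# Sample row with made-up values.
user = {"user_id": 42, "country": "NZ", "email": "jane@example.com"}

analyst_view = apply_pii_policy(user, "ANALYST")
approved_view = apply_pii_policy(user, "PII_READER")
print(analyst_view)
print(approved_view)
```

Both roles can query the same table, so analysts keep access to the non-sensitive columns they need, while the sensitive field is only readable by an approved role.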

Enabling the ML team and AI readiness

Machine learning models are only as good as the data feeding them. Before our engagement, Kiwi's ML team was bogged down by infrastructure limitations and inconsistent data.

By fixing the foundational data layer, we removed the data engineering burden from the ML team. With clean, documented, and accessible data pipelines in place, their infrastructure went from just "storing data" to being "AI-ready." The ML team could now trust the data they were pulling and focus their energy on building and refining models, rather than troubleshooting pipelines.

What this unlocks for the business

The impact of a scalable data foundation extends far beyond the data team:

- Focus on High-Value Work: The ML team can now focus on building models instead of wrestling with database performance and data engineering tasks.

- Self-Serve Analytics: Downstream teams have reliable, self-serve access to modeled data, reducing their dependence on the data team for basic reporting.

- Future-Proof Scale: The platform is secure by design and can scale with growing data volumes and future AI initiatives.

Final thought

AI initiatives often fail not because of poor algorithms, but because of a weak data foundation. You cannot build reliable machine learning models on top of a fragile, unscalable data warehouse.

In Kiwi's case, the win was building a foundation that matched the ambition of their ML team: reliable infrastructure, standardized processes, clear security models, and a platform ready for the future of AI.