TL;DR (for the busy execs)

- Built Takenos' modern data foundation from the ground up in roughly two months.

- Unified product, operational, and business data into a governed analytics layer cross-functional teams could use.

- Consolidated transaction and revenue logic into shared models, reducing metric ambiguity across teams.

- Introduced dbt as the transformation workflow and helped the team learn how to maintain it after handoff.

- Added an internal analytics assistant layer so stakeholders could access information in plain language, not only through dashboards or ad hoc requests.

- Gave product, finance, operations, and growth a common language for decision-making instead of fragmented reporting.

Context

Takenos is building a borderless financial product for people and businesses moving money across Latin America and beyond. As that kind of product grows, the data challenge grows with it: more touchpoints, more operational complexity, more questions from more teams, and a much higher cost of getting metrics wrong.

That was the real mandate behind this engagement. The goal was not just to "set up a stack." It was to give Takenos a reliable analytics foundation that could support product decisions, commercial visibility, and internal alignment as the company scaled.

In about two months, we worked with the team to build that foundation from scratch.

How we delivered it

One thing we care a lot about in projects like this is making the work visible and collaborative from day one. This was not a long black-box implementation with a reveal at the end. We worked in close weekly cycles with the Takenos team, sharing progress, adapting scope as we learned more, and keeping the implementation connected to the people who would eventually own it.

We also ran three workshops across different stages of the rollout so the team could understand the architecture, the modeling approach, and the operational workflow around dbt and the platform. That combination of weekly updates, hands-on collaboration, and structured knowledge transfer made it easier to move quickly without losing alignment.

Something that made a real difference was the in-person dynamic. While the engagement was not fully on-site, a good part of the work happened face to face with the Takenos team. That in-person time helped build trust faster, shortened feedback loops, and made it easier to resolve ambiguity on the spot instead of letting it stall in async threads. In our experience, that kind of proximity is one of the things that lets a project like this move at the pace it did.

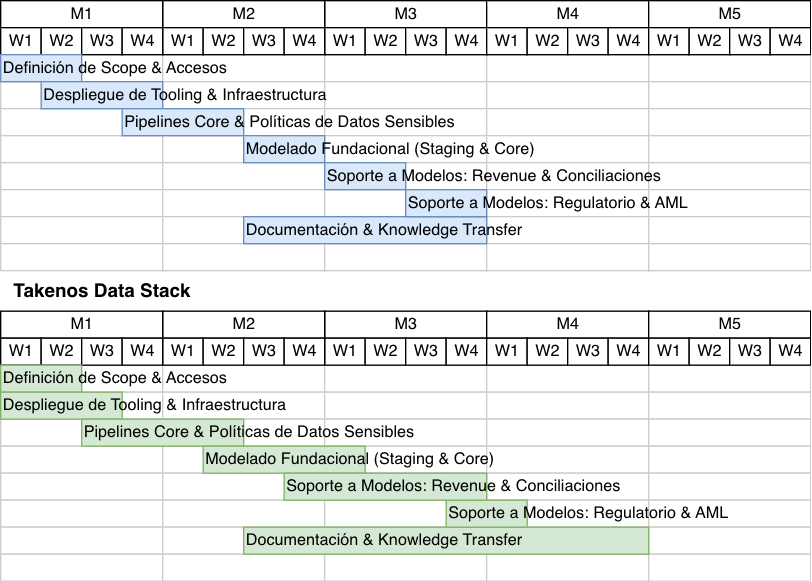

The timeline above shows the original plan against the actual execution path. The important point is not just speed. It is that the project stayed tightly coordinated while the work evolved with the team's needs.

What we built

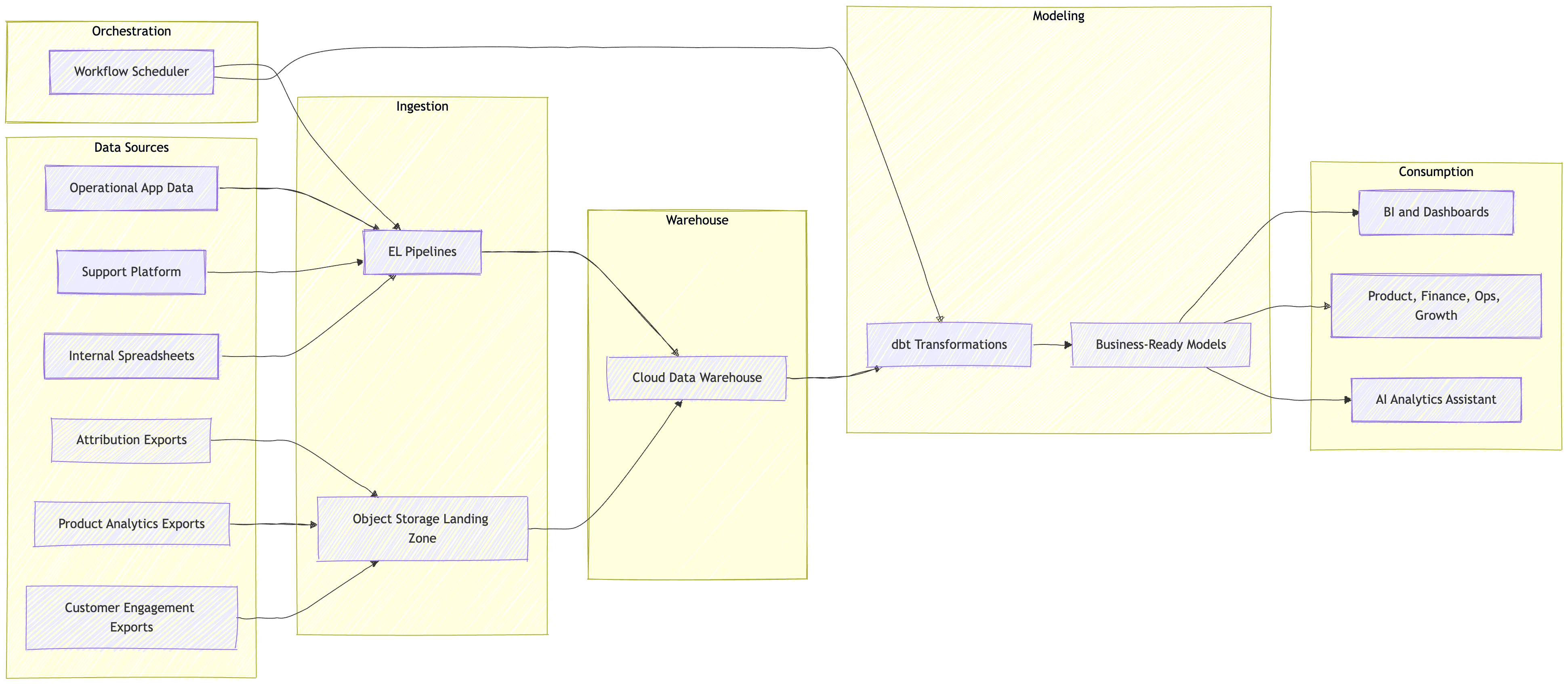

We designed and implemented a complete modern data architecture with a clear operating model behind it.

At a high level, that meant:

- Infrastructure managed as code so the platform could be reproducible and easier to evolve.

- Automated ingestion and orchestration to move source data into a warehouse reliably.

- A dbt-based transformation layer to turn raw source tables into trusted business models.

- A layered modeling approach so teams could move from source-level detail to decision-ready metrics without losing traceability.

- An internal analytics assistant that helped democratize access to information without requiring broad SQL access across the company.
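To make the layered modeling idea concrete, here is a minimal Python sketch of the pattern. It is an illustration only, not the Takenos models: the column names, the sample rows, and the "completed only" rule are assumptions, and in the actual platform this logic would live in dbt models rather than Python functions.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical raw rows, roughly as they might land from a source system.
RAW_TRANSACTIONS = [
    {"ID": "t1", "USER": "u1", "AMOUNT_CENTS": "1250", "STATUS": "COMPLETED"},
    {"ID": "t2", "USER": "u1", "AMOUNT_CENTS": "800", "STATUS": "FAILED"},
    {"ID": "t3", "USER": "u2", "AMOUNT_CENTS": "4300", "STATUS": "COMPLETED"},
]


def staging(raw_rows):
    """Staging layer: rename and type columns, one output row per source row."""
    return [
        {
            "transaction_id": r["ID"],
            "user_id": r["USER"],
            "amount": Decimal(r["AMOUNT_CENTS"]) / 100,
            "status": r["STATUS"].lower(),
        }
        for r in raw_rows
    ]


def intermediate(staged_rows):
    """Intermediate layer: apply a business rule (assumed here: completed only)."""
    return [r for r in staged_rows if r["status"] == "completed"]


def mart_volume_by_user(completed_rows):
    """Mart layer: a decision-ready metric that keeps source ids for traceability."""
    out = defaultdict(lambda: {"volume": Decimal("0"), "transaction_ids": []})
    for r in completed_rows:
        out[r["user_id"]]["volume"] += r["amount"]
        out[r["user_id"]]["transaction_ids"].append(r["transaction_id"])
    return dict(out)


volume = mart_volume_by_user(intermediate(staging(RAW_TRANSACTIONS)))
```

Each layer does one job, and the final metric still carries the transaction ids it was built from, which is what lets a team trace a number back to source-level detail.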

The most important part was not the tooling itself. It was the shape of the models and the discipline around them.

Working closely with the Takenos team, we helped define a shared analytics layer that connected the signals different parts of the company cared about: product activity, operational workflows, support context, and business performance. That let us move from isolated reporting needs to a single foundation that multiple teams could build on.

We also complemented that foundation with an AI-powered analytics assistant built on agent-blue, designed to let stakeholders ask questions in plain language and get answers grounded in the warehouse and modeled logic. For teams outside the data function, that meant easier access to information without bypassing the governance built into the platform.

The diagram at the top of this section shows how these pieces fit together at a high level.

The hard part: making metrics mean the same thing everywhere

In fast-growing companies, the main problem is rarely "no data." It is that every function ends up with its own partial version of the truth.

For Takenos, one of the most valuable parts of the project was consolidating transaction and revenue logic into shared models that could be reused across the company. Instead of leaving each team to interpret financial and operational activity in its own way, we created a common layer where the core definitions lived in one place.

That matters more than most teams expect.

When product, finance, operations, and growth are all looking at slightly different numbers, decision-making slows down. Roadmap conversations become debates about definitions. Reporting becomes reactive. Trust erodes quietly.

By centralizing that logic, we helped Takenos create a more stable base for planning, analysis, and day-to-day execution.
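As a rough illustration of what "core definitions in one place" means in practice, the sketch below has two team-specific reports that both call the same revenue function. The revenue rule (fees on completed transactions), the field names, and the sample data are hypothetical and chosen only to show the pattern.

```python
from decimal import Decimal


def fee_revenue(transactions):
    """Single shared definition (assumed rule): fees on completed transactions."""
    return sum(
        (t["fee"] for t in transactions if t["status"] == "completed"),
        Decimal("0"),
    )


def finance_report(transactions):
    """Finance view: total revenue, taken from the shared definition."""
    return {"revenue": fee_revenue(transactions)}


def product_report(transactions):
    """Product view: same revenue number, plus a per-transaction ratio."""
    completed = [t for t in transactions if t["status"] == "completed"]
    revenue = fee_revenue(transactions)
    per_txn = revenue / len(completed) if completed else Decimal("0")
    return {"revenue": revenue, "revenue_per_completed_transaction": per_txn}


# Hypothetical sample data.
SAMPLE = [
    {"transaction_id": "t1", "status": "completed", "fee": Decimal("1.50")},
    {"transaction_id": "t2", "status": "failed", "fee": Decimal("0.75")},
    {"transaction_id": "t3", "status": "completed", "fee": Decimal("2.50")},
]

finance = finance_report(SAMPLE)
product = product_report(SAMPLE)
```

Because neither report re-implements the rule, a change to the definition lands in both at once, and the two teams cannot drift apart on what "revenue" means.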

Why this mattered for product leaders

For heads of product, a data platform is valuable only when it improves the speed and quality of decisions.

This engagement was designed to do exactly that.

With a governed transformation layer and shared business models in place, product questions stop depending on one-off data pulls or tribal knowledge. Teams can answer questions faster, compare outcomes with more confidence, and spend more time deciding what to do next instead of reconciling conflicting reports.

Just as importantly, the work created a better bridge between product metrics and business metrics. In many companies, those worlds live too far apart. Here, we focused on making them usable together, so the company could connect activity, operations, transactions, and revenue in a more coherent way.

That kind of alignment is especially important in fintech, where product behavior and business performance are tightly linked.

Introducing dbt, not just installing it

A big part of the engagement was helping the team adopt dbt as a maintainable way of working, not as another tool someone external had to manage forever.

We introduced a modeling workflow centered on version control, testing, documentation, and reusable transformations. But we also worked to make sure the team could operate it after us.

That knowledge transfer mattered as much as the implementation itself.

Our goal in projects like this is never to create dependency. It is to help clients stand up a strong analytics foundation, then make sure their team understands how to extend it with confidence.
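For readers who have not used dbt, the testing part of that workflow can be pictured as a set of small checks that return the rows violating a rule, with zero rows meaning the check passes. The Python sketch below is loosely modeled on dbt's built-in `not_null`, `unique`, and `accepted_values` tests. It is not how dbt is implemented, and the sample rows and allowed statuses are assumptions.

```python
from collections import Counter


def not_null(rows, column):
    """Return rows where the column is missing or None."""
    return [r for r in rows if r.get(column) is None]


def unique(rows, column):
    """Return rows whose non-null value in the column appears more than once."""
    counts = Counter(r[column] for r in rows if r.get(column) is not None)
    return [
        r for r in rows
        if r.get(column) is not None and counts[r[column]] > 1
    ]


def accepted_values(rows, column, values):
    """Return rows whose non-null value is outside the allowed set."""
    return [
        r for r in rows
        if r.get(column) is not None and r[column] not in values
    ]


# Hypothetical model output with three deliberate problems.
ROWS = [
    {"transaction_id": "t1", "status": "completed"},
    {"transaction_id": "t2", "status": "pending"},
    {"transaction_id": "t2", "status": "settled"},
    {"transaction_id": None, "status": "completed"},
]

failures = {
    "not_null_transaction_id": len(not_null(ROWS, "transaction_id")),
    "unique_transaction_id": len(unique(ROWS, "transaction_id")),
    "accepted_values_status": len(
        accepted_values(ROWS, "status", {"completed", "pending", "failed"})
    ),
}
```

Checks like these run alongside the models under version control, so a broken definition surfaces as a failing test instead of a surprising dashboard.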

Making data easier to access

One of the most useful extensions of the project was not another dashboard. It was making trustworthy information easier to reach.

Once the core models and definitions were in place, we could layer in an internal AI assistant so teams could ask questions in natural language and explore answers with less friction. Done well, this is not a replacement for modeling or governance. It is a better access pattern on top of them.

For product leaders, that matters because access is often the real bottleneck. Even when the right data exists, too many answers still depend on a specialist queue. By reducing that gap, Takenos could move closer to a culture where reliable information is easier to consume across the organization.
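One way to picture "a better access pattern on top of governance" is an assistant that can only answer from a registry of modeled metrics. The sketch below is a simplified assumption for illustration: it is not agent-blue's API, it skips the natural-language step entirely, and the metric names and rows are hypothetical.

```python
# Hypothetical registry: only metrics defined here can be answered.
GOVERNED_METRICS = {
    "completed_transactions": lambda rows: sum(
        1 for r in rows if r["status"] == "completed"
    ),
    "active_users": lambda rows: len(
        {r["user_id"] for r in rows if r["status"] == "completed"}
    ),
}


def answer(metric_name, rows):
    """Answer only from governed definitions; refuse anything else."""
    if metric_name not in GOVERNED_METRICS:
        raise KeyError(f"'{metric_name}' is not a governed metric")
    return GOVERNED_METRICS[metric_name](rows)


# Hypothetical modeled rows.
ROWS = [
    {"user_id": "u1", "status": "completed"},
    {"user_id": "u1", "status": "completed"},
    {"user_id": "u2", "status": "failed"},
]

completed = answer("completed_transactions", ROWS)
active = answer("active_users", ROWS)
```

The design choice is that the assistant widens who can ask, not what counts as a valid answer: anything outside the governed definitions is refused rather than improvised.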

What this unlocks for the business

- Faster answers to cross-functional questions without rebuilding logic every time.

- More trust in product and business metrics because core definitions live in shared models.

- Better prioritization for product teams working across multiple signals and constraints.

- Less reporting friction between product, finance, operations, and growth.

- Broader access to decision-supporting information through natural-language workflows on top of governed data models.

- A data foundation the internal team can keep evolving instead of a black box they have to work around.

Final thought

The most successful data projects are not the ones with the longest tool list. They are the ones that help a company think more clearly.

In Takenos' case, the win was building a foundation that matched the ambition of the business: reliable infrastructure, better models, clearer metrics, and a team that could keep moving forward with ownership.

If you want to see the kind of outcomes we focus on, start with our proof and how we help product teams.

If your product organization is scaling faster than your analytics foundation, let's talk. We can help you build the layer that makes better decisions possible.